Question

The wavelengths of the four Balmer series lines for hydrogen are found to be 410.3, 434.2, 486.3, and 656.5 nm. What average percentage difference is found between these wavelength numbers and those predicted by

$$\frac{1}{\lambda} = R\left(\frac{1}{n_f^2} - \frac{1}{n_i^2}\right)?$$

It is amazing how well a simple formula (disconnected originally from theory) could duplicate this phenomenon.

Final Answer

The average percent difference is -0.01%. Please see this Google spreadsheet for the calculations.

Solution video

OpenStax College Physics for AP® Courses, Chapter 30, Problem 24 (Problems & Exercises)

Video Transcript

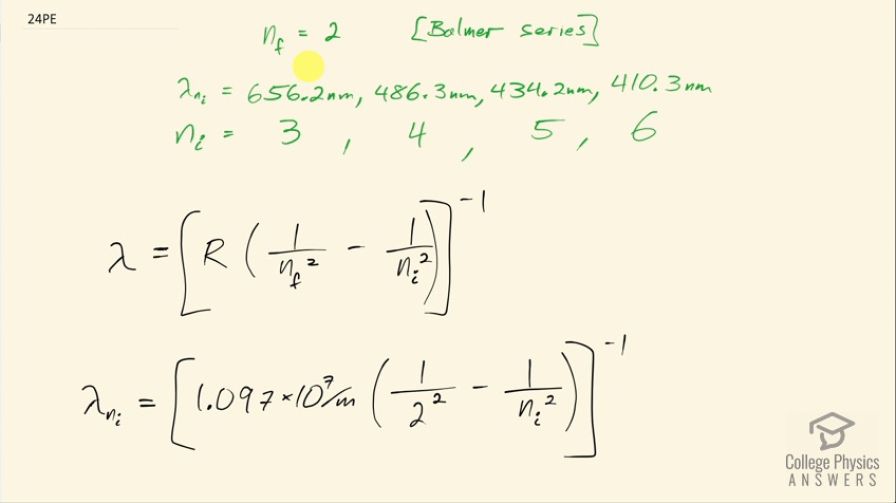

This is College Physics Answers with Shaun Dychko. The first four lines in the Balmer series for hydrogen are measured, and "the first four" means the four lowest-energy, or longest-wavelength, lines. The measurements are 656.2 nanometers, 486.3, 434.2, and 410.3 nanometers. Since this is the Balmer series, the final energy level for the electron is number 2, and that means the initial energy level has to be 3 for the lowest-energy transition, which corresponds to the longest-wavelength photon, because the energy of a photon is hc over λ and so the lowest energy occurs for the longest wavelength. Then for the 486.3 nanometer line, you would expect the electron to begin at the fourth energy level.

Okay! Now we are supposed to use this formula to compare what the formula says with what the measurements are, and we are going to find the percent difference for each result and then find the average percent error. The wavelength is the reciprocal of Rydberg's constant times the quantity 1 over the final energy level, which is 2, squared, minus 1 over the initial energy level squared. So we have 1.097 times 10 to the 7 per meter, times 1 over 2 squared minus 1 over whatever the starting energy level is squared, all to the negative 1.

I put a spreadsheet together to do the calculation for each starting energy level. For a starting energy level of 3, we have Rydberg's constant multiplied by 1 over the final energy level of 2 squared, minus 1 over the beginning energy level squared, where the beginning energy level is cell A2, which holds the number 3. In the next row we have cell A3, which holds the number 4, and so on, so the only thing that changes is the value of the starting energy level. Then we take that to the power of negative 1 to get its reciprocal and convert it into nanometers by multiplying by 1 times 10 to the 9.

So that's the formula there. Then I typed in the measured wavelengths and found the percent error by taking the difference between the two and dividing by the calculated wavelength, and clicked on the percent button to format the result as a percent. Down here is the average of these percent errors, cells D2 to D5, and that is negative 0.01 percent, which is a very small average percent error for such a simple formula.
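The spreadsheet steps described in the transcript can be sketched in a few lines of Python. This is only an illustration, not the spreadsheet itself: the names `RYDBERG`, `balmer_wavelength_nm`, `measured_nm`, `predicted_nm`, and `percent_diff` are made up for the example. Two assumptions are worth flagging. First, the measured values are the ones read out in the transcript (656.2 nm for the longest line, whereas the question statement lists 656.5 nm). Second, the transcript only says the difference is divided by the calculated wavelength, so the sign convention (calculated minus measured) is assumed here because it gives a negative average like the quoted answer.

```python
# Rydberg constant in 1/m, using the value quoted in the transcript.
RYDBERG = 1.097e7


def balmer_wavelength_nm(n_initial, n_final=2):
    """Wavelength in nm from 1/lambda = R * (1/n_f^2 - 1/n_i^2)."""
    inverse_wavelength = RYDBERG * (1 / n_final**2 - 1 / n_initial**2)
    # Take the reciprocal (meters) and convert to nanometers.
    return 1e9 / inverse_wavelength


# Measured wavelengths in nm as read in the transcript, keyed by the
# starting energy level n_i (assumption: 656.2, not the question's 656.5).
measured_nm = {3: 656.2, 4: 486.3, 5: 434.2, 6: 410.3}

# Predicted wavelength for each starting level (final level is 2).
predicted_nm = {n: balmer_wavelength_nm(n) for n in measured_nm}

# Assumed sign convention: (calculated - measured) / calculated, in percent.
percent_diff = {
    n: 100 * (predicted_nm[n] - measured_nm[n]) / predicted_nm[n]
    for n in measured_nm
}

average_percent_diff = sum(percent_diff.values()) / len(percent_diff)

for n in sorted(measured_nm):
    print(f"n_i={n}: predicted {predicted_nm[n]:.2f} nm, "
          f"measured {measured_nm[n]:.1f} nm, diff {percent_diff[n]:+.3f}%")
print(f"average percent difference: {average_percent_diff:+.2f}%")
```

Under these assumptions the average rounds to -0.01%, in line with the final answer. Swapping in 656.5 nm for the first line, or reversing the sign convention, changes the result, so both choices should be checked against the linked spreadsheet.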